Speeding up the JavaScript ecosystem - oxlint and oxfmt

tl;dr: Future versions of oxlint and oxfmt will be more than twice as fast on projects with many (>=50k) directories.

- Part 1: PostCSS, SVGO and many more

- Part 2: Module resolution

- Part 3: Linting with eslint

- Part 4: npm scripts

- Part 5: draft-js emoji plugin

- Part 6: Polyfills gone rogue

- Part 7: The barrel file debacle

- Part 8: Tailwind CSS

- Part 9: Server Side JSX

- Part 10: Isolated Declarations

- Part 11: Rust and JavaScript Plugins

- Part 12: Semver

- Part 13: oxlint and oxfmt

At a certain scale, the characteristics of software development change. We are seeing a massive surge in both the size of repositories and the volume of PRs. Consequently, the sheer number of CI runs required to keep a project moving is exploding. This pressure is amplified further by more and more work being done in parallel on local machines. For these reasons, tools need to be as fast as possible.

For this reason, the oxc project is a top contender to become the standard tooling platform of choice. Its Rust parser is engineered for performance and has already received an immense amount of scrutiny on that front.

When I ran both oxfmt and oxlint on codebases with a high directory-to-file ratio, something didn't feel right.

Why are you idling, CPU?

Oxc’s parser is benchmarked to process hundreds of megabytes of JavaScript per second. On a modern machine with 12+ cores and a high-speed NVMe drive, the codebases I was testing should have been a sub-3-second job. At that speed, you expect the CPU fans to kick in immediately as every core gets saturated with parsing and linting logic.

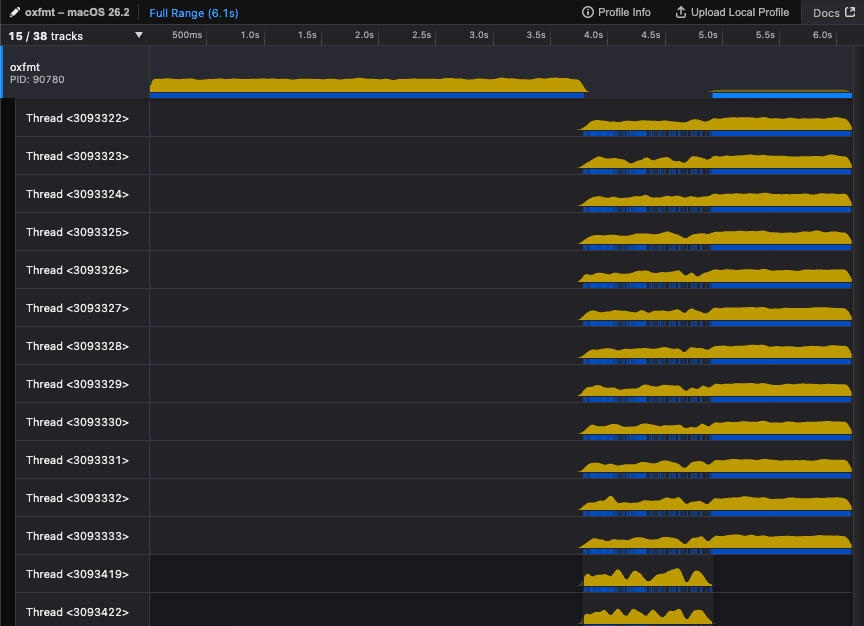

Instead, the execution felt "lazy", coming in at around 6s. Let's fire up a profile to see what's actually happening.

Welp, that looks like everything is waiting on something. A "healthy" profile would show work being done on every lane, which would signal that the machine is being used to the fullest. That's clearly not the case here. Nothing in the first block was doing any actual work: no formatting or linting was happening there. Something much more innocent-looking was running instead: config file discovery. Huh?

The syscall ghost

What's clear from this profile is that oxc internally works in phases: discover config files, build up a sort of "work map", and then do the actual work based on that. Like most tools, oxfmt supports several config files:

- .oxfmtrc.json

- .oxfmtrc.jsonc

- oxlint.config.ts

These config files can also be nested. The inner config file takes precedence over any configs in parent directories.
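The precedence rule can be sketched as a lookup that walks from a file towards the filesystem root and stops at the first directory that has a config. This is a minimal illustration under stated assumptions, not oxc's implementation: the nearest_config helper and the directory-to-config map are hypothetical, and each config is just a string standing in for a parsed config.

```rust
use std::collections::HashMap;
use std::path::{Path, PathBuf};

/// Hypothetical helper: `configs` maps each directory that contains a config
/// file to a stand-in config value. `Path::ancestors` yields the path itself,
/// then its parent, grandparent, ..., so the first hit is the innermost config.
fn nearest_config<'a>(file: &Path, configs: &'a HashMap<PathBuf, String>) -> Option<&'a String> {
    file.ancestors().find_map(|dir| configs.get(dir))
}

fn main() {
    let mut configs = HashMap::new();
    configs.insert(PathBuf::from("/repo"), "root config".to_string());
    configs.insert(PathBuf::from("/repo/packages/app"), "app config".to_string());

    // The nested config wins over the root one for files below it.
    let app = nearest_config(Path::new("/repo/packages/app/src/index.ts"), &configs);
    // Outside that subtree, only the root config applies.
    let lib = nearest_config(Path::new("/repo/packages/lib/index.ts"), &configs);
    println!("{app:?} {lib:?}"); // Some("app config") Some("root config")
}
```

A per-file lookup like this is fine for illustration; during a traversal it is cheaper to carry the current config down the tree as you go.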

The straightforward way to implement config discovery is a simple loop that checks for each config file whenever you encounter a directory.

// SLOW: Each .is_file() is a syscall (stat/metadata)

for path in [

dir.join(self.config_file_names.json),

dir.join(self.config_file_names.jsonc),

dir.join(self.config_file_names.js),

] {

if path.is_file()

&& let Some(config) = self.discover_config_file(&path)

{

configs.push(config);

}

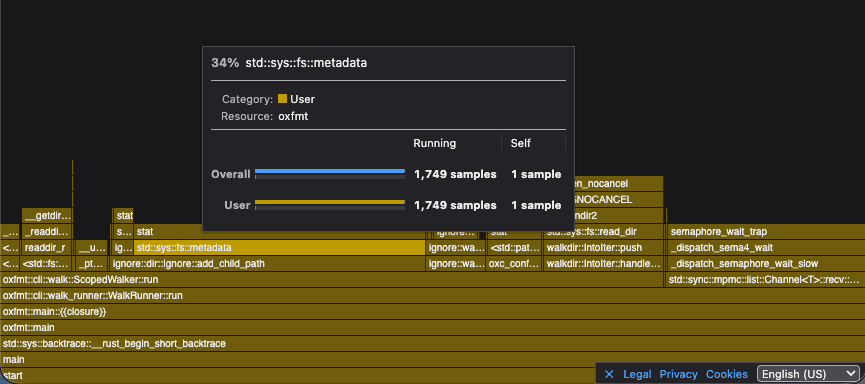

}This part is very visible in the profile:

Like most programming languages, Rust makes this code look kinda innocent. But there's a big performance issue hiding in plain sight: every .is_file() call does a syscall underneath. A syscall is way more than just a function call. It is a full-blown transition from user mode to kernel mode.
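To see where the syscall hides: the Rust standard library documents Path::is_file() as a convenience wrapper that queries the file's metadata (following symlinks) and coerces any error to false. The sketch below spells that out with std::fs::metadata; is_file_desugared is a hypothetical name used only for illustration.

```rust
use std::fs;
use std::path::Path;

/// Roughly what `Path::is_file()` does: ask the kernel for the file's metadata
/// (a stat-family syscall that follows symlinks) and treat any error,
/// including "not found", as `false`.
fn is_file_desugared(path: &Path) -> bool {
    fs::metadata(path).map(|m| m.is_file()).unwrap_or(false)
}

fn main() {
    // Each check below crosses into the kernel, even for paths that don't exist.
    for name in [".oxfmtrc.json", ".oxfmtrc.jsonc", "Cargo.toml"] {
        let p = Path::new(name);
        println!("{name}: {} (is_file: {})", is_file_desugared(p), p.is_file());
    }
}
```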

When you ask the OS if a file exists, your process has to stop what it's doing and hand control over to the kernel. The kernel then has to:

- Validate the path string

- Traverse the filesystem's internal directory structure

- Check security permissions

- Interact with the disk controller (in case the data isn't already cached)

- Switch back to your process with the result

Even if the filesystem metadata is hot in the OS cache, you still pay the penalty of crossing that boundary. In a tight loop, this is a performance killer. Multiply this tiny overhead by 150k sequential calls (three config file names checked in each of 50k directories) and you get a massive wall of latency. Our process was forced to wait for the kernel to finish its paperwork.
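The cost scales with the number of directories, not the number of configs. This hypothetical probe_discovery sketch reproduces the probe-per-name pattern on a synthetic tree and counts the is_file() probes it issues. The three names come from the supported config files listed earlier; the stack-based walk is an assumption standing in for oxc's real traversal.

```rust
use std::fs;
use std::io;
use std::path::{Path, PathBuf};

// Config file names taken from the list of supported configs.
const CONFIG_NAMES: [&str; 3] = [".oxfmtrc.json", ".oxfmtrc.jsonc", "oxlint.config.ts"];

/// Probe-style discovery: for every directory, build one candidate path per
/// config name and stat it. Returns (configs found, number of is_file() probes).
fn probe_discovery(root: &Path) -> io::Result<(Vec<PathBuf>, usize)> {
    let mut found = Vec::new();
    let mut probes = 0;
    let mut stack = vec![root.to_path_buf()];
    while let Some(dir) = stack.pop() {
        for name in CONFIG_NAMES {
            let candidate = dir.join(name); // allocates a new PathBuf
            probes += 1; // one metadata syscall per candidate
            if candidate.is_file() {
                found.push(candidate);
            }
        }
        // The traversal itself still needs readdir to find subdirectories.
        for entry in fs::read_dir(&dir)? {
            let entry = entry?;
            if entry.file_type()?.is_dir() {
                stack.push(entry.path());
            }
        }
    }
    Ok((found, probes))
}

fn main() -> io::Result<()> {
    // Synthetic tree: root + 1000 empty subdirectories, no configs at all.
    let root = std::env::temp_dir().join("probe_discovery_demo");
    let _ = fs::remove_dir_all(&root);
    for i in 0..1000 {
        fs::create_dir_all(root.join(format!("d{i}")))?;
    }
    let (found, probes) = probe_discovery(&root)?;
    // 1001 directories x 3 names = 3003 probes, even though nothing was found.
    println!("found {} configs with {probes} probes", found.len());
    fs::remove_dir_all(&root)?;
    Ok(())
}
```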

Zero-cost discovery with readdir

Looking at the traversal code, we were asking the OS questions we already had the answers to. Traversing a directory structure typically uses the readdir syscall, which returns a list of directory entries. Those entries already contain the filename.

```rust
// FAST: Zero syscalls. We are just checking strings in memory.
for entry in entries {
    let filename = entry.file_name();
    // Checking filenames here is a simple string comparison
    if (filename == self.config_file_names.json
        || filename == self.config_file_names.jsonc
        || filename == self.config_file_names.js)
        && entry.file_type().is_file() // usually provided by readdir for free
    {
        if let Some(config) = self.discover_config_file(entry.path()) {
            configs.push(config);
        }
    }
}
```

What's even better is that we also got rid of all the path construction calls we had earlier (dir.join(...)). With 50k directories, the old code would trigger 150k syscalls regardless of whether there were any config files. The new code never triggers an extra syscall for a directory without a config file, and when there is one, it only triggers as many syscalls as there are config files, never more. This is a giant leap forward in terms of performance.
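For comparison, here is the readdir-based idea as a self-contained sketch using only std::fs. The readdir_discovery name and the stack-based walk are hypothetical; the point is that spotting a config becomes a string comparison on names that read_dir already returned. The std docs note that DirEntry::file_type() needs no extra syscall on Windows and most Unix platforms, though some Unix platforms fall back to a symlink_metadata call.

```rust
use std::fs;
use std::io;
use std::path::{Path, PathBuf};

// Config file names taken from the list of supported configs.
const CONFIG_NAMES: [&str; 3] = [".oxfmtrc.json", ".oxfmtrc.jsonc", "oxlint.config.ts"];

/// Hypothetical readdir-based discovery: no candidate paths are built and no
/// metadata is queried per directory; names come straight from read_dir.
fn readdir_discovery(root: &Path) -> io::Result<Vec<PathBuf>> {
    let mut found = Vec::new();
    let mut stack = vec![root.to_path_buf()];
    while let Some(dir) = stack.pop() {
        for entry in fs::read_dir(&dir)? {
            let entry = entry?;
            // Usually filled in from the readdir result itself (e.g. d_type).
            let ty = entry.file_type()?;
            if ty.is_dir() {
                stack.push(entry.path());
            } else if ty.is_file()
                && entry.file_name().to_str().is_some_and(|n| CONFIG_NAMES.contains(&n))
            {
                // Only here would a real tool open and parse the config.
                found.push(entry.path());
            }
        }
    }
    Ok(found)
}

fn main() -> io::Result<()> {
    // Synthetic tree: 1000 empty directories plus one nested config.
    let root = std::env::temp_dir().join("readdir_discovery_demo");
    let _ = fs::remove_dir_all(&root);
    for i in 0..1000 {
        fs::create_dir_all(root.join(format!("d{i}")))?;
    }
    fs::write(root.join("d42").join(".oxfmtrc.json"), "{}")?;
    println!("{:?}", readdir_discovery(&root)?);
    fs::remove_dir_all(&root)?;
    Ok(())
}
```

One behavioral difference worth knowing: DirEntry::file_type() does not follow symlinks, whereas Path::is_file() does, so a symlinked config file would need an extra check in this version.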

Getting rid of phases

The final piece of the puzzle was the phase-based structure of the code. Since config files can only affect files in their own directory or below, we can process files during the same traversal that we use for config discovery. No more separate config file prescan. By fusing these steps, we eliminated the idle startup period.
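A fused traversal can be sketched by carrying the config that is in effect down the tree while walking it only once. This is a hypothetical illustration, not oxc's code: fused_walk and is_config are made-up names, configs are represented only by their paths (no loading or merging), and the "work" is a list of (file, config) pairs where a real tool would dispatch linting or formatting. Because readdir returns entries in no particular order, each directory's entries are collected first so a config sitting next to source files is seen before those files are handed out.

```rust
use std::fs;
use std::io;
use std::path::{Path, PathBuf};

const CONFIG_NAMES: [&str; 3] = [".oxfmtrc.json", ".oxfmtrc.jsonc", "oxlint.config.ts"];

/// Hypothetical check: is this entry a regular file with a config name?
fn is_config(entry: &fs::DirEntry) -> bool {
    entry.file_name().to_str().is_some_and(|n| CONFIG_NAMES.contains(&n))
        && entry.file_type().is_ok_and(|t| t.is_file())
}

/// Single pass: discover configs and emit work items in the same walk.
/// Each item pairs a source file with the innermost config above it (if any).
fn fused_walk(root: &Path) -> io::Result<Vec<(PathBuf, Option<PathBuf>)>> {
    let mut work = Vec::new();
    // Each stack entry carries the config inherited from its parent directory.
    let mut stack = vec![(root.to_path_buf(), None::<PathBuf>)];
    while let Some((dir, inherited)) = stack.pop() {
        let entries = fs::read_dir(&dir)?.collect::<io::Result<Vec<_>>>()?;
        // A config in this directory replaces the inherited one.
        let config = entries.iter().find(|e| is_config(e)).map(|e| e.path()).or(inherited);
        for entry in &entries {
            let ty = entry.file_type()?;
            if ty.is_dir() {
                stack.push((entry.path(), config.clone()));
            } else if ty.is_file() && !is_config(entry) {
                // A real tool would start linting/formatting this file right away.
                work.push((entry.path(), config.clone()));
            }
        }
    }
    Ok(work)
}

fn main() -> io::Result<()> {
    let root = std::env::temp_dir().join("fused_walk_demo");
    let _ = fs::remove_dir_all(&root);
    fs::create_dir_all(root.join("pkg").join("src"))?;
    fs::write(root.join(".oxfmtrc.json"), "{}")?;
    fs::write(root.join("a.ts"), "")?;
    fs::write(root.join("pkg").join(".oxfmtrc.jsonc"), "{}")?;
    fs::write(root.join("pkg").join("src").join("b.ts"), "")?;
    for (file, config) in fused_walk(&root)? {
        println!("{} -> {:?}", file.display(), config);
    }
    fs::remove_dir_all(&root)?;
    Ok(())
}
```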

Remember: Syscalls are expensive

Modern programming languages make it easy to overlook expensive syscalls. In any high-level language, path.is_file() looks like a simple, harmless boolean check. But beneath that clean abstraction lies an expensive round trip into the kernel. This is a fun one because performance work usually makes you think of algorithms, but here it's much more about understanding the boundary between a process and the environment it lives in.

Upcoming versions of both oxlint and oxfmt will be more than twice as fast in projects with thousands of directories:

| Version | Run time |
|---|---|
| before | 6.1s |
| after | 2.8s |
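For reference, the arithmetic behind "more than twice as fast", using the two timings from the table:

$$
\frac{6.1\ \text{s}}{2.8\ \text{s}} \approx 2.18\times \text{ speedup}, \qquad \frac{6.1\ \text{s} - 2.8\ \text{s}}{6.1\ \text{s}} \approx 54\%\ \text{less run time}
$$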

Special thanks to Jovi DeCroock for PR'ing a quick fix to unblock folks, and to Yuji Sugiura from the oxc team for refactoring the overall config handling. Original investigations: #22225 and #22250.